Install Chilkat for Node.

Replace 'YOUR_TABLE_NAME' and 'YOUR_FIELD_NAME' with the name of the table and field that contains the image attachment:

    const table = base.getTable('YOUR_TABLE_NAME')
    const record = await input.recordAsync('Process PSDs', table)
    const attachment = record.getCellValue('YOUR_FIELD_NAME')
    const attachmentName = record.getCellValue('fileName')
    const imageData = await remoteFetchAsync(imageUrl, ).then(response => response.…

(Node.js) S3 Upload a File with Public Read Permissions

Demonstrates how to upload a file to the Amazon S3 service with the x-amz-acl request header set to "public-read" to allow the file to be publicly downloadable.
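As a rough illustration of the x-amz-acl header mentioned above, here is a minimal sketch that only builds fetch-style request options. It does not use Chilkat, and the helper name `buildPublicReadPutOptions` is hypothetical. A real request sent directly to S3 would also need to be signed, or routed through a proxy that signs it; that part is left out here.

```javascript
// Hypothetical helper (not from the original post): builds fetch-style
// options for a PUT upload that asks S3 to make the object publicly readable.
function buildPublicReadPutOptions(body, contentType) {
  return {
    method: 'PUT',
    headers: {
      'Content-Type': contentType,
      // Canned ACL: the uploaded object can be downloaded by anyone.
      'x-amz-acl': 'public-read',
    },
    body,
  };
}

const options = buildPublicReadPutOptions('hello', 'text/plain');
console.log(options.headers['x-amz-acl']); // prints "public-read"
```

The header only takes effect if the bucket's settings allow public ACLs.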

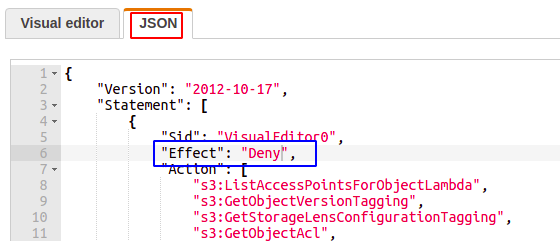

In its most basic sense, a policy contains several elements, including the Resource: the Amazon S3 bucket, object, access point, or job that the policy applies to. For a complete list of Amazon S3 actions, resources, and conditions, see Actions, resources, and condition keys for Amazon S3. To make the objects in your bucket publicly readable, you must write a bucket policy that grants everyone s3:GetObject permission. After you edit S3 Block Public Access settings, you can add a bucket policy to grant public read access to your bucket.

In Terraform, grant cannot be mixed with the external aws_s3_bucket_acl resource for a…

There was an issue with uploading to a specific bucket and 'folder', which I got some help with. The problem with trying to create an item in the S3 "input" folder is that S3 doesn't really have folders in the underlying bucket, only objects. It simulates a folder in the GUI using the forward slash (/) in the key name, but actually all it's doing is creating an object called "input/name.jpg", rather than an object called "name.jpg" in the "input" folder. The other issue is that the API gateway uses the same forward slash (/) to split a path up. So when you try to put an object with a key that includes the slash, the API gateway sees it as a new path, and you get the Missing Token error because that path doesn't exist. It took me a little while to figure out the solution, but the trick is to use the URL-encoded form of the slash in the URL, which is %2f, i.e. "input/name.jpg" becomes "input%2fname.jpg". This allows me to put image attachments to pbposters-images/input.

So I can now upload directly from any Airtable base straight into S3 with a simple script (created with help from ChatGPT). Replace 'YOUR_API_GATEWAY_URL' with the URL of your API Gateway endpoint.
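The %2f trick can be sketched in a few lines of JavaScript. This assumes the API Gateway route has the shape `{gateway}/{bucket}/{key}`, which the post does not confirm; the function name `buildUploadUrl` is hypothetical. Note that `encodeURIComponent` emits the uppercase form `%2F`, which is equivalent to `%2f`.

```javascript
// Hypothetical helper: builds the PUT URL for an object, encoding the slash
// inside the key so the gateway does not treat it as a path separator.
// The {gateway}/{bucket}/{key} layout is an assumption.
function buildUploadUrl(gatewayUrl, bucket, key) {
  const base = gatewayUrl.replace(/\/+$/, ''); // drop trailing slashes
  return `${base}/${encodeURIComponent(bucket)}/${encodeURIComponent(key)}`;
}

console.log(
  buildUploadUrl('https://YOUR_API_GATEWAY_URL', 'pbposters-images', 'input/name.jpg')
);
// prints "https://YOUR_API_GATEWAY_URL/pbposters-images/input%2Fname.jpg"
```

Encoding the whole key as a single path segment means the gateway sees one `{key}` parameter, and S3 still receives the object name "input/name.jpg" once the value is decoded.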

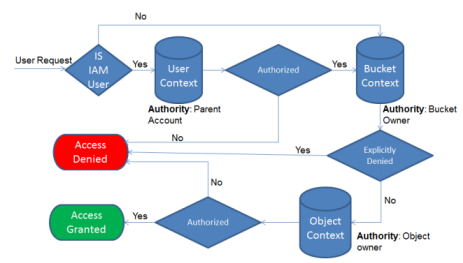

If you use grant on an aws_s3_bucket, Terraform will assume management over the full set of ACL grants for the S3 bucket, treating additional ACL grants as drift. To manage changes of ACL grants to an S3 bucket, use the aws_s3_bucket_acl resource instead.

Some web apps 'hide' the URL inside strange HTML elements, such as putting a transparent image on top, so users can't right-click and save the image. However, the user could look at the HTML code and still access the image and URL. If the URL is accessible, they can do anything with it.

The solution I found was creating an AWS API Gateway and allowing PUT requests to an S3 bucket. This was actually quite straightforward and well documented on YouTube. Permissions and roles are a little tricky but doable as a no/low coder, and again well documented.

This walkthrough explains how user permissions work with Amazon S3. In this example, you create a bucket with folders. You then create AWS Identity and Access Management (IAM) users in your AWS account and grant those users incremental permissions on your Amazon S3 bucket and the folders in it.
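To make the idea of folder-level permissions more concrete, here is a minimal sketch that builds an IAM policy document limited to one "folder" (key prefix). The function name `buildFolderReadPolicy`, the statement IDs, and the bucket and folder names are placeholders, not values from the walkthrough; the choice of `s3:ListBucket` plus `s3:GetObject` is just one plausible read-only combination.

```javascript
// Hypothetical helper: returns an IAM policy document that lets a user
// list and read objects under a single prefix of one bucket.
function buildFolderReadPolicy(bucket, folder) {
  const prefix = folder.replace(/\/+$/, '') + '/'; // normalise to "folder/"
  return {
    Version: '2012-10-17',
    Statement: [
      {
        Sid: 'AllowListingOfFolder',
        Effect: 'Allow',
        Action: ['s3:ListBucket'],
        Resource: [`arn:aws:s3:::${bucket}`],
        // Listing is a bucket-level action, so it is narrowed by prefix.
        Condition: { StringLike: { 's3:prefix': [`${prefix}*`] } },
      },
      {
        Sid: 'AllowReadingObjectsInFolder',
        Effect: 'Allow',
        Action: ['s3:GetObject'],
        Resource: [`arn:aws:s3:::${bucket}/${prefix}*`],
      },
    ],
  };
}

console.log(JSON.stringify(buildFolderReadPolicy('YOUR_BUCKET_NAME', 'input'), null, 2));
```

The printed JSON could be pasted into the IAM console as an inline policy for a user; further actions such as `s3:PutObject` would be added to the second statement if that user also needs to upload.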
